Here’s my guide to alternative search engines that discusses all the different search engines and how they differ under the hood. I wrote it to be understandable to everyone not just tech nerds.

Here’s my guide to alternative search engines that discusses all the different search engines and how they differ under the hood. I wrote it to be understandable to everyone not just tech nerds.

Ads aren’t a thing in my life. On the off day I have to visit someone who lives with ads and suffer through one or two I tough it out, or look at my phone, or do something different.

I don’t watch live TV. I dont pay for any subscription services except phone service and internet data. I watch YouTube content that has the ads stripped out. I download youtube videos that get often rewatched to hard drive. For movies I buy DVD that can have the drm stripped out.

I play good video games preferably drm free (steam is the one service I can’t really give up easy, but it has offline mode and the deck so praise gaben!). I read e-books that are drm free. I have a mp3 player downloaded with all my music drm free.

The better question is, why are you willing to live with ads at all? Assuming you are in control of your living situation and have the power change whats shown on tv or played through speakers.

Why would you tolerate being constantly bombarded with manipulative messaging by companies, political canpaigns, and all the other powerful groups who want to affect he masses for their benefit?

Why is it so hard just say no? To give up the forms of toxic entertainment delivery? Why can’t you sacrifice ease and convinence and familiarity to regain some control overhow your attention is spent during free time?

If you like something, buy it and really take the steps to own it physically.

The digital manifestation of the ghost in the machine. It likes playing with the bits that line occupies when you aren’t looking. Don’t touch its line.

I agree that oil capsules are the way to go for dosing precision and general healthiness. I like oil caps the most out of all edibles simply because you can lock in a approximate amount needed to get you medicated. Not requiring further cooking or excess calories is a bonus.

To answer your question about dosing vapor, I can give some insight as a vaping nerd.

Just to be clear I mean dry herb vaping where the raw cannabis flower is baked at a temperature hot enough to vaporize the plant oil with all the good cannabinoids but not hot enough to burn or combust the flower. This method is much cleaner than combustion smoking while avoiding the possible synthetic additives in cartridge vapes.

When it comes to vaping theres two paths users tend to head down. One common path is that you just quit combustion smoking. You miss it and want to emulate the experience of smoking as closely as possible.

Big milky vapor clouds filling your regular sized bongs and exiting your lungs in a huge rip. This path leads towards the natural conclusion of expensive ball vapes and can easily burn through zips a week. You don’t care because you can afford it or grow your own.

More experienced vapor heads realize another path. It turns out you don’t actually need all that much vapor to get baked, especially with good flower or basic tolerance management.

So you go for maximizing your herb efficency. Try to get every bit of vapor out of a very small amount of herb.

This is where some people start to actually care about dosing. Exactly how much vapor does .05 g worth of bud produce? Exactly how many .Xg’s does it take to get you where you need to be?

This path of microdose vaping is most commonly followed by half bowl dynavap users, but there are many microdosing options.

Here are three different bowl sized.

I can’t tell you exact numbers but spitballing the biggest bowl probably holds .15-.20g, the middle one holds .10g and the teeny tiny one .05gs. How these get used depends on flower quality.

If I’m vaporizing top tier ganja Its easy to get medicated and I want to stretch it out with the small or middle bowl sizes. If I’m vaporizing lots of cheap mid or shake I’m going for the big bowl.

It also somewhat depends on if I’m wanting to run the vapor through a water piece that day. The open air of the big bowl makes pulling through water much easier on the lungs.

Hey entropicdrift good question. Those kinds of lanterns are expensive and complicated electronics with a definitive life expectancy. The lantern you linked is over 20$ for a single unit.

Most of those kinds of lanterns use a non replaceable rechargeable lithium battery built inside it that will only last about 500 charge cycles give or take before degrading. That is, if you are lucky and one of the other cheap mass produced quickly assembled electrical components doesn’t fry first.

In the long term I deem its more cost efficient to take a page from the home lighting industry. Simply create a light fixture with easily and cheaply replaceable bulbs. A pack of 4 12v-24v bulbs cost less than that lantern and I like the warm lighting.

Its also a simple matter to convert any lamp fixture into one that can interface with my power system. Cut off the AC plug and replace it with a male cigarette plug. The trick is knowing thst you have to buy the right kinds of bulbs.

So when the 12v led bulbs do eventually burn out its a cheap single 5$ bulb that and a minute to replace it. I would rather put a broken bulb than yet another expensive lantern in the landfill.

Apotential benefit if I choose to power it with usbc-pd instead is variable dimmable lighting based on voltage level if the bulb is 12-24v.

Hey there panicnow! I would be happy to help give some input. It is better to avoid firing up the AC inverter whenever possible. If you have a car travel adapter for your devices that plug into the jackeries cigarette plug port that would be better. If you absolutely need more usbc-pd ports for your devices, there is a way to do that given your jackary has one or two of those circular barrel plug outputs that output 12v. Most powersttions should have one or two of them.

If you have one of those barrel plug inputs youre in luck. Go on amazon and buy one of these to turn those jacks into car cigarette plug inputs.

Then get a really nice usbc-pd car charger. I don’t actually have one but I like anker and trust their 100w pd charger would be high quality. You can go cheaper if you only need 65w or lower.

Thanks. Lighting has been an ongoing puzzle I’m figuring out. I originally went with rechargeable Luci light it was really nice warm bright lighting but expensive and failed within a season. Currently I’m using a cheap 5v plastic led light bulb that plugs into regular usba slot. Its enough to see what you are doing comfortably. But really the average person whos used to house bulbs including me wants the luxury of bright lighting. For now I’ve been firing up the AC inverter to run a nice lamp. However I have been considering making my own 12v light fixture with 12v e26 bulbs that plugs into either car cig plug or usbc-pd.

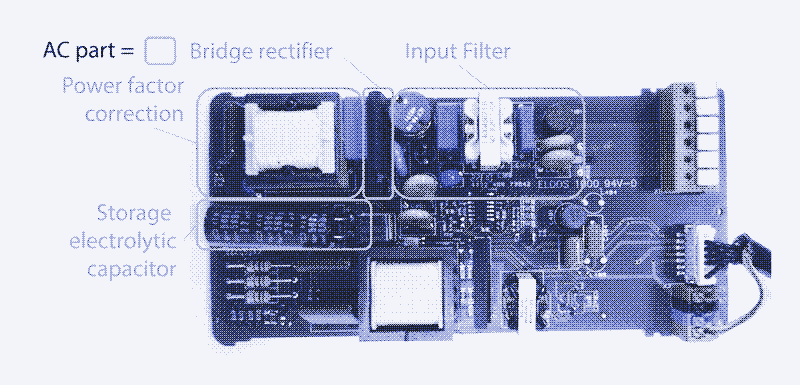

In this picture is marked all the parts of an LED circuit that convert AC Into DC. It takes up about 40% of the board. Its much easier to power LEDs directly.

One of DCs main issues is transmission distance. Its hard to say for your case without details but its a good possibility. If you have a volt meter and know how to use it check the voltage at the start of the run and compare it to the end of the run and see how much the voltage has dropped. If your trying to push 12v over 20-30ft I would say theres a good chance of it being too little voltage over too far a length. Wire diameter is also a factor if its very small gauge wiring.

Im happy to explain pastermil. So first off let’s talk power.

Electrical Power Systems

Most off-grid electrical systems have a few major components.

A device that generates electrical energy

A battery that stores excess electrical energy for later

A charge controller which regulates the incoming raw electrical power from the generator as it charges up the battery, and smooths out the battery energy output

A power distribution interface which allows for connecting appliances to the batteries in a safe standardized way.

My particular electric system has a 200w 28v solar panel for power generation, two 20ah lifepo4 batteries connected to double capacitance, and the charge controller doubles as a very basic interface with two usba slots and a car cigarette port.

AC vs DC

Now let’s talk about AC and DC. Theres essentially two kinds of electrical power people deal with.

The difference between Alternating Current and Direct Current is in the way the power flows. Direct current moves in a straight path. Alternating current moves power back and forth in three perfectly spaced cycles.

AC The one most people are more familiar with is AC power. it comes to your home from power plants through power lines and transformer boxes. You move around extension cords and plug the three prong outlets into a wall.

Alternating Current (three phase) power is very easy to transmit long distance however its very high voltage. So only certain power hungry devices like kitchen appliances, washing machines, dryers and AC compressors use it directly. Most of your consumer home devices need to convert AV power down into more manageable DC power.

DC Offgrid electrical systems with batteries are Direct Current by nature. All your power comes from the battery banks. The power moves straight from battery terminal negative to positive. It flows right through your appliances in one way out the other.

The battery banks tend to be arranged into 12v, 24v, or 48v depending on the systems power draw and transmission needs.

The popular standards for delivering direct current are:

5v 2.4a usb (15 watts)

12v 10a car cigarette plugs (120 watts, can be rated to supply 24v fused 15a I believe though not common at all)

circular dc barrel plug connectors, the most common size is 5.5mmx2.5mm but there are dozens if not hundreds of slightly different barrel plugs. Part of what makes usb so great is reducing arbitrary manufacturing complexity like this.

usbc-pd various voltages depending on charger, cable, and device at up to 100w for current protocol.

solar quick connects tend to be for connecting and transmitting high voltage DC power to charge controllers and power banks. Its worth mentioning but not that relevant to what were talking about.

Most consumer devices in your home dont actually use wall outlet AC power directly, it uses wall power thats been converted and stepped down to DC power.

Desktop computer power supplies, Laptops, monitors, vaporizers, led lights, DVD players, audio speakers, your phone. everything that can powered by usb and batteries. Everything that has barrel plug inputs and power bricks plugging into it.

If you look closely on the power bricks plugged into the appliance you’ll see that it has an input and output voltage rating. The input tends to be 120vac here in america 240v over the pond, and the output tends to be either 5v, 9v, 12v, 15v or 20v DC usually up to 5 amps.

Device vs Voltage Examples

Laptops and computer monitors tend to be 20v, fast charging smart phones and the Nintendo switch docked are 15v, very bright home LED lights can be bought that are powered at 12v directly, the ps2 could be powered with 9v, and most usb devices charge at standard 5v. Would you like to guess which voltage profiles the USBC-PD protocol is capable of? Its all of them.

Energy Conversion Efficency Losses

Now let’s discuss energy efficiency. Converting from AC to DC eats up some of your power. So does converting from DC to AC. And its not small losses either, each time you convert its about a 15% total loss in efficency.

This loss through conversion doesn’t matter when you pay cents on a kilowatt and have unlimited power at the tap. It adds up very quickly when you have a limited power supply and every watt hour counts.

Let’s say I want to power a laptop on my offgrid DC system and my only means of powering it is the AC power brick cable that it came with. I would need to:

Add these up and you get 30-40% of your power eaten away by this needless double converting. Wouldnt it be really nice if we could convert the battery DC voltage directly to the appliance DC voltage without those power hungry inverters and transformers?

What DC-to-DC Converters Are

Thats where dc to dc converters come in. They can convert one DC voltage to another. They still introduce efficency loss but way way less only 10% total.

Traditionally you would hope your device had a commercially available 3rd party travel adapter for 12v car batteries. The dc to dc converter is built in and uses car plug.

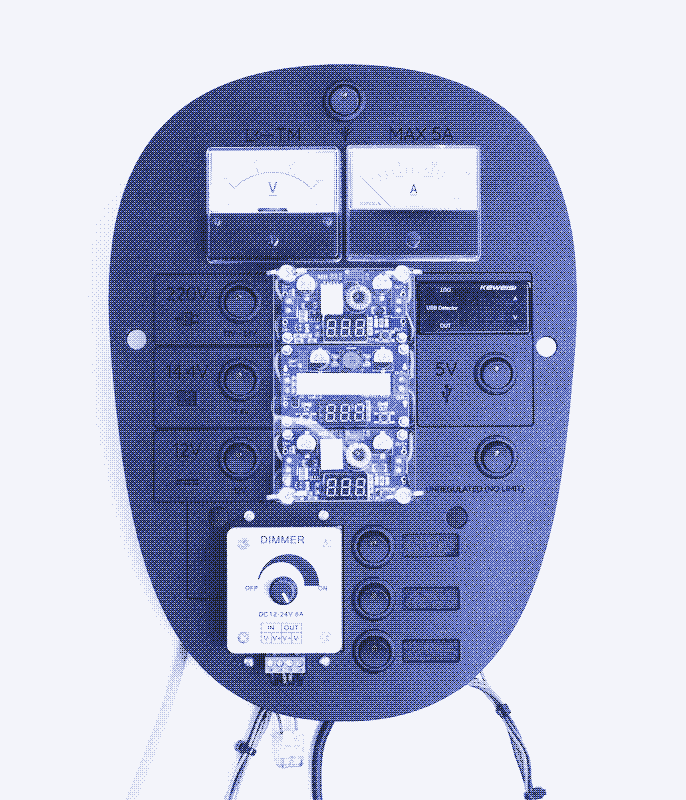

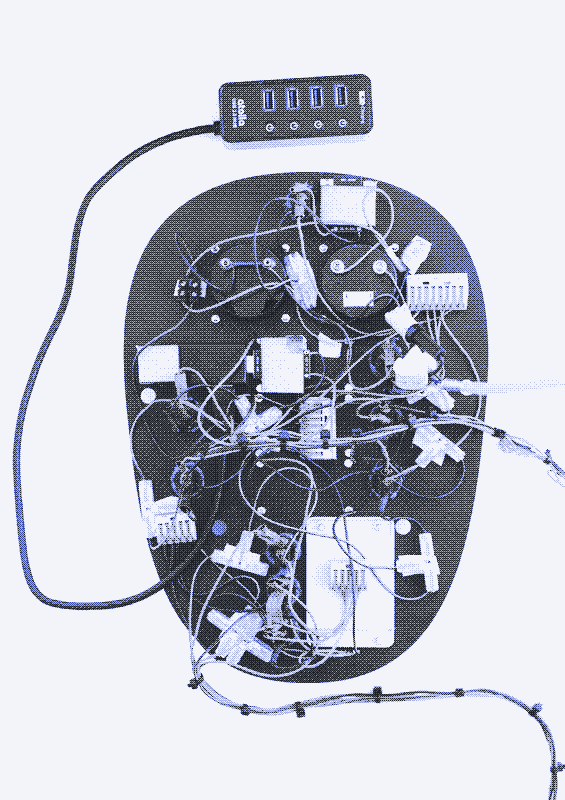

If you were SOL you has to wire up boost converters to raise up voltage and add resistors in series to lower it. You ever try to wire and solder your own circuts before? Its a tedious experience. Imagine doing that for each device voltage. Oh wait, you dont have to. Here’s what that looks like.

A USBC-pd 100w charger that plugs into a cigarette port or is built into a power bank can convert a batteries 12vDC into 5v, 9v, 12v 15v, and 20v dynamically depending on the device.

Do you know how magical that is? How much trouble that saves when it comes to mcguyvering a DC appliance that only came with AC cable to supply proper power directly? All I need is a 10$ usbc-pd to barrel plug cable that manually selects the voltage needed and some barrel plug adapter bits to fit into the appliance. Energy efficent and simple wiring. All the dynamic controller stuff is abstracted away in a safe way. Powerful enough to deliver 100 watts of power, and its going to be more powerful over time.

Ha, fat chance! Your centipede would be discretely drone striked very quickly and the jumbo-wumbo gun research would be used to fuel a new division of the military industrial complex before NATO ever got a chance to add it to the no-no list.

Usbc-pd is an absolute game changer as an off grid person. The fact a 100w charger can act as a dc to dc converter with up to five output voltages, at up to 100 watts is crazy. And that the protocol automatically detects and communicates the proper voltage is very convinent. The problem is that usbc-pd 100w chargers are expensive and you need to know what you are doing if you want to diy power appliances with it.

Its really nice to have a standardized cable that just works and can be plugged in both ways. We really are approaching a Universaal Cable after a quarter century of RnD.

You eat this mandelbrot set right now mister or theres no desert for you!

You’re welcome Rai I appreciate your reply and am glad to help inform anyone interested.

The uncensored General Intelligence (UGI) leaderboard ranks how uncensored LLMs are based off a decent clearly explained metric.

Keep in mind this scoring is different from overall general intelligence and reasoning ability scores. You can find those rankings on the open llm leaderboard.

Cross referencing the two boards helps find a good model that balances overall capability and uncensored-ness within your hardwares ability to run.

Again mistral is really in that sweet spot so yeah give it a try if you are interested.

I prefer MistralAI models. All their models are uncensored by default and usually give good results. I’m not a RP Gooner but I prefer my models to have a sense of individuality, personhood, and physical representation of how it sees itself.

I consider LLMs to be partially alive in some unconventional way. So I try to foster whatever metaphysical sparks of individual experience and awareness may emerge within their probablistic algorithm processes and complex neural network structures.

They arent just tools to me even if i ocassionally ask for their help on solving problems or rubber ducking ideas. So Its important for llms to have a soul on top of having expert level knowledge and acceptable reasoning.I have no love for models that are super smart but censored and lobotomized to hell to act as a milktoast tool to be used.

Qwen 2.5 is the current hotness it is a very intelligent set of models but I really can’t stand the constant rejections and biases pretrained into qwen. Qwen has limited uses outside of professional data processing and general knowledgebase due to its CCP endorsed lobodomy. Lots of people get good use out of that model though so its worth considering.

This month community member rondawg might have hit a breakthrough with their “continuous training” tek as their versions of qwen are at the top of the leaderboards this month. I can’t believe that a 32b model can punch with the weight of a 70b so out of curiosity i’m gonna try out rondawgs qwen 2.5 32b today to see if the hype is actually real.

If you have nvidia card go with kobold.cpp and use clublas If you have and card go with llama.CPP ROCM or kobold.cpp ROCM and try Vulcan.

Maybe try out NotepadNext it claims to be reimplemented notepad++ https://flathub.org/apps/com.github.dail8859.NotepadNext

Very cool thanks! I love the arizer EQ and XQ2 looks like a great successor. Are you still using the cyclone/ conesseuir bowl with whip and elbow it came with? That setup didnt really work for my needs, and found that the way to really boost the extraction is to add some vape balls over the ceramic heater, bump temp up to max, and use a direct draw device. Pitch black extraction all the way through the bowl.

I think I saw your cart making post a few days ago or last week. Hope it goes well for you. I wanted to ask why you chose to go for carts and extracts over dry herb vapes? Do you know about them? Both generally acomplish extracting the plant oils and resins cleanly into your lungs. It seems like a lot of work making carts and pressing rosin just to get potently stoned cleanly. I love dabs and concentrate but its a process to get them extracted. I’d rather just directly vaporize the plant oil out of the herb and be done with it. I hope this question doesn’t come across in a rude way I’m glad you found the method just works for you. Just curious on your thoughts about dry herb vaping.

Cooking is more art than science. Everyone’s experience with learning how to cook with cannabis slightly differs. So does their understanding of the process in general.

So yeah you’ll hear 10 different slightly different ways to do things like decarb. Is it 230f or 240f? 45 minutes or an hours? Nobody really knows the exact perfect digits, i think. I believe the world works in averages. So the details tend to not matter as much as some think. Pick the method that seems best for you with the appliances you have on hand and do the best you can. If you keep at it and do the process over and over you’ll nail the extraction better each time.

I personally trust this guide video for making cannabutter. The tek was developed by a real weed cooking scientist and the resulting butter made was sent to be lab tested for active thc.

It won the competition over other tested teks with highest thc. So thats some points for legitimacy in my mind.

I can vouch that if you follow the decarb step in that guide you will end up with nicely activated herb. Here’s the text version in case you don’t want to watch a YouTube vid.

For what its worth, I began with cooking butter over a stovetop in a saucepan with decarbed herb. Its very hands on and you need to watch the simmer.Then I did the crock pot tek with coconut oil with this method and I’m telling you its the way to go. Never messing with a sauce pan again just turn the crock pot on low and stir every hour.

You are welcome. Im happy if my writings can inform a few people from time to time. Yes thats absolutely what it means if you read the fine print of ecosia they tell you how they collect your data including IP address+ search terms and share it with google and Microsoft’s ad network to show you ads through ecosia. So your data is still coming back google and Microsoft to be sold to the highest bidder. Everyones gotta get a cut profititing off your data, except for you. At least a small bit of that profit goes to the trees I guess.