One of the main reasons not to use AI is that it just makes up random shit…

But even with that it’s probably more accurate than what a fucking cop would write.

What I’m worried about is cops making sure the AI says what they want (lies), and then blaming the AI when questioned so they escape the consequences.

I’ve signed a lot of forms that say something like “I certify that the information I have provided is true and accurate”. Using ChatGPT doesn’t absolve me of that. It shouldn’t for them either (but we all know they’re held to a different standard).

What is more racist, though? The average cop or the average LLM? I’d wager it’s the average cop. So it would still be a net benefit.

Devil’s advocate: in the case of a monthly report, an LLM is often used like “take these current statistics and update last month’s report to include them.”

As in… the LLM is not developing an opinion, it’s just presenting the numbers.

Monthly reporting is usually very formulaic. There’s no scope for “I propose forming a lynch mob comprised of vigilantes”.

This isn’t about them using LLMs for monthly reports, this is about them using LLMs for individual incident reports.

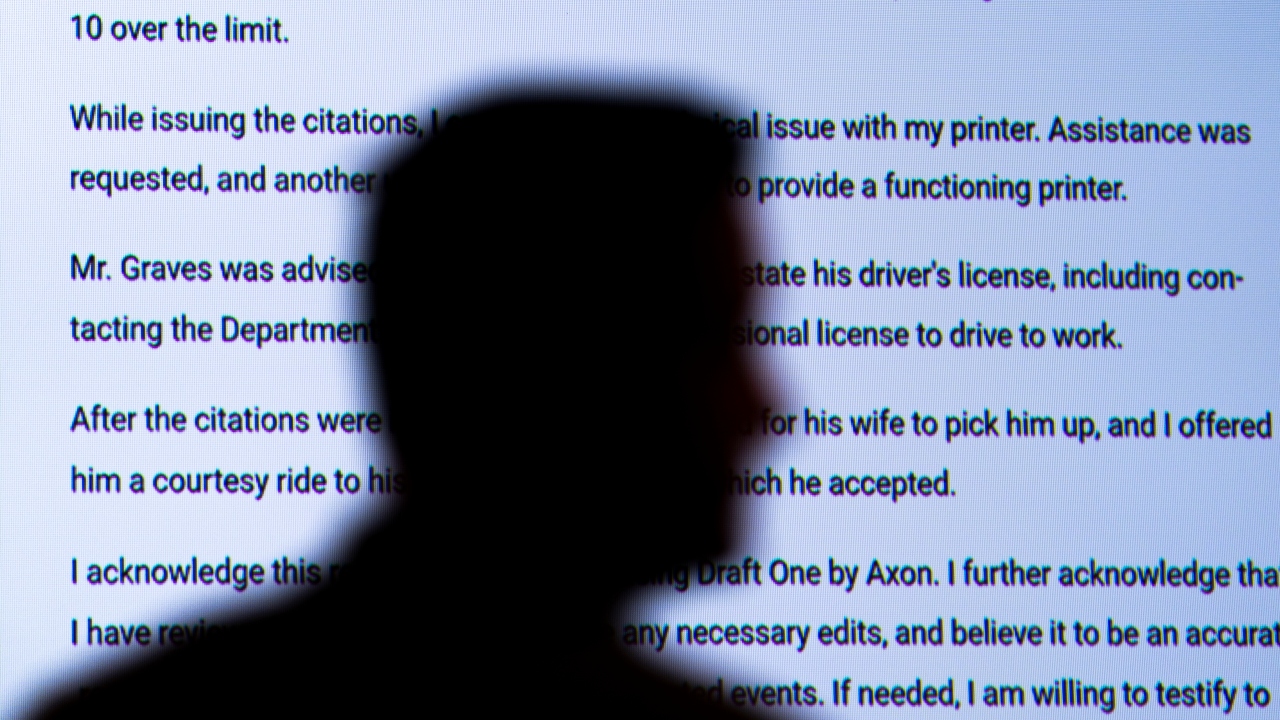

Pulling from all the sounds and radio chatter picked up by the microphone attached to Gilbert’s body camera, the AI tool churned out a report in eight seconds. …

Oklahoma City’s police department is one of a handful to experiment with AI chatbots to produce the first drafts of incident reports. Police officers who’ve tried it are enthused about the time-saving technology, while some prosecutors, police watchdogs and legal scholars have concerns about how it could alter a fundamental document in the criminal justice system that plays a role in who gets prosecuted or imprisoned.

“take these current statistics and update last month’s report to include them.”

That is literally the worst use case for an LLM. It’s something a simple script could do, but it’s hard, dry data that the LLM is free to hallucinate on and that people are too lazy to check over manually.

Also, LLMs can’t math.
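To make the “simple script” point concrete, here is a minimal sketch of a deterministic report update, assuming the monthly report is a CSV with a `month` column plus one column per statistic. The function name `append_month` and the CSV layout are hypothetical, chosen only for illustration; nothing in the thread says how any department actually stores its reports.

```python
import csv
import io


def append_month(report_csv, month, stats):
    """Return the report text with one new row for `month`.

    Assumes (hypothetically) a non-empty CSV whose header contains a
    `month` column plus one column per statistic.
    """
    reader = csv.DictReader(io.StringIO(report_csv))
    fieldnames = reader.fieldnames
    rows = list(reader)

    # Refuse to guess: the new statistics must match the existing columns exactly.
    expected = set(fieldnames) - {"month"}
    if set(stats) != expected:
        raise ValueError(f"expected statistics {sorted(expected)}, got {sorted(stats)}")

    # The numbers are copied verbatim; nothing is generated or rephrased.
    rows.append({"month": month, **stats})

    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()
```

Because the values are copied rather than generated, the output can only be wrong if the input is wrong, and a mismatched column fails loudly instead of being silently filled in.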

https://web.archive.org/web/20240828120602/https://thegrio.com/2024/08/27/police-officers-are-starting-to-use-ai-chatbots-to-write-crime-reports-despite-concerns-over-racial-bias-in-ai-technology/

https://thegrio.com/2024/08/27/police-officers-are-starting-to-use-ai-chatbots-to-write-crime-reports-despite-concerns-over-racial-bias-in-ai-technology/

The AI doesn’t have fucking racial bias; humanity, and the content it produces and feeds into the AI, has a racist bias.

No need to split hairs here. The product that people use and call “AI” is what is relevant.

So your logic is that a child can’t be racist if the parents are racist?

No, that a knife isn’t racist when its owner goes on a Muslim stabbing spree.

I’ve never seen a knife capable of writing a police report, or doing anything else we normally attribute only to brains.

The problem with AIs is that they seem to become racist by “choosing” racist stigma over the alternative, even though racist stigma is NOT the majority of the info they are based on.