This might cheer you up: https://visualstudiomagazine.com/articles/2024/01/25/copilot-research.aspx

I don’t think we have anything to worry about just yet. LLMs are nothing but well-trained parrots. They can’t analyse problems or have intuitions about what will work for your particular situation. They’ll either give you something general copied and pasted from elsewhere or spin you a yarn that sounds plausible but doesn’t stand up to scrutiny.

Getting an AI to produce functional large-scale software requires someone to explain the problem domain precisely: every requirement, business rule, edge case, and so on. At that point, that person is basically a developer, because I’ve never met a project manager who thinks that granularly.

They could be good for generating boilerplate, inserting well-known algorithms, generating models from metadata, that sort of grunt work. I certainly wouldn’t trust them with business logic.

What killed No Time to Die for me was the nanobots being declared unsolvable in the same movie that explicitly shows EMPs being used. I thought for sure that was a Chekhov’s gun being set up, but no, just bad writing.

Yesssssss. I just got done splitting up a 3000-line mess of React code into a handful of simple, reusable components. Better than sex.

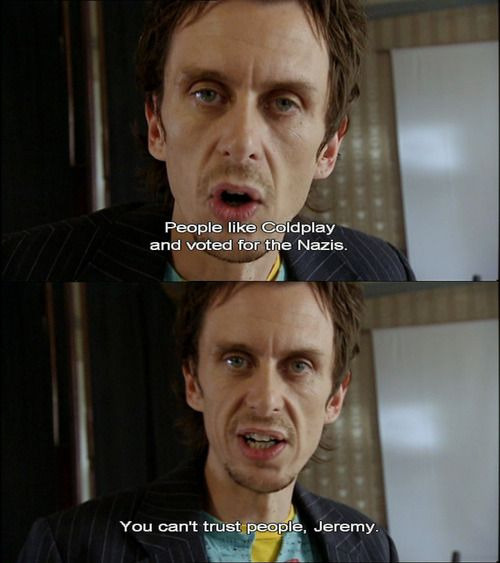

You’ll never live like common people. You’ll never do whatever common people do.